Classification of Musical Instruments

ERICH M. VON HORNBOSTEL AND CURT SACHS


TRANSLATED FROM THE ORIGINAL GERMAN*

BY ANTHONY BAINES AND KLAUS P. WACHSMANN

TRANSLATORS’ PREFACE

The revival of a learned treatise about half a century after its first appearance is

an unusual event, and there must be cogent reasons for taking such a step.

In the present case the reasons are not hard to state. No other system of classification

is more frequently quoted, nor has any later system been able to supplant

it. On these grounds alone it would be difficult to write it off as being

out-of-date.

Apart from the arguments of the system itself, the biting comments on

curators and collectors, and on the waywardness of their cataloguing, are as

relevant today as they were fifty years ago. Reed instruments are still apt to be

labelled as trumpets if the bell is flared (there is a dismal case of this in one of

our great museums at present), and the terminology is still at times as muddled

as it was in the many instances of which Hornbostel and Sachs complained;

while as for anthropologists, their publications do not invariably give proof

that all have read their Zeitschrift für Ethnologie.

It is true that criticisms have been made, and modifications demanded here

and there; even the authors did not subsequently feel themselves rigidly bound

to what they had first stated in 1914, when they also tried to anticipate those

points over which need for revision was most likely to arise. A good account of

these criticisms has been given in Jaap Kunst’s Ethnomusicology (3rd edn., The

Hague, 1959). None of the critics, however, could persuade the present translators

that a return to the original text might involve the undesirable resurrection

of some best-forgotten error. On the contrary, the discussions of the

system’s merits or demerits have convinced them that it is necessary for students

to have easy access to the source itself. This is not meant to imply that the

Hornbostel-Sachs tables are in all circumstances easily applied; one need but

think of some of the many varieties of stamping tubes, e.g. of the slender

‘stamping tubes’ of the Shambala of East Africa, who ‘make slits [in the tubes]

and wave them backwards and forwards while dancing, so that the tongues

are caused to vibrate by atmospheric pressure’ (Hornbostel, 1933, p. 296), or

* Erich M. von Hornbostel und Curt Sachs, ‘Systematik der Musikinstrumente.

Ein Versuch’, Zeitschrift für Ethnologie, Jahrg. 1914. Heft 4 u. 5. (Berlin,

1914.) The translators are grateful to Professor Georg Eckert, Editor of the

Zeitschrift, for his assent to the work’s republication.


of the bamboo tubes which they strike against the ground or drum upon with

twigs; or of the stamping tubes of their next-door neighbours, the Pare, who

cover the end of the tube that hits the ground with a membrane. Are these

cases of Kontamination (see below, paragraph 14) of a basic type ‘stamping

tube’, or is the first a type of free aerophone (41 in the tables), the second a

plosive aerophone (413), the third a percussion idiophone (111.2), and the

fourth a membranophone (2z) of sorts?

The original text did not reach a large musical public since it appeared in the

comparative obscurity of an ethnological journal, while also, being written in

German, it did not become as widely known in the English-speaking world as

it might have done otherwise. Thus there is a clear case for now offering an

English translation. To do so at this moment will serve also as a fitting memorial

to Professor Curt Sachs, who died in 1959. Posterity can pay no higher tribute

to a scholar than to return to his and his collaborator’s work and put it into the

hands of a wider public than knew it before. It is in this spirit that the English

translation is published.

The text paragraphs were not numbered in the original. Words in square

brackets are the authors’ if German, and the translators’ if otherwise. The translators’

terminology in the tables takes due account of English terms used by

the authors in their various later publications, as Hornbostel in ‘The Ethnology

of African Sound-Instruments’, Africa, vol. VI (London, 1933), glossary,

pp. 303-11; and Sachs in The History of Musical Instruments (New York, 1940),

‘Terminology’, pp. 454-67. Many of their English terms have come into wide

use, and have been kept save in a few cases where a change (even in one case

to French) seemed to the translators unavoidable or greatly preferable. Most

of the more obscure instruments cited in the tables are described by Sachs in

his Real-Lexikon (Berlin, 1913). Footnotes are original unless stated.

3 CHORDOPHONES One or more strings are stretched between

fixed points

31 Simple chordophones or zithers The instrument consists solely of a

string bearer, or of a string bearer with a resonator which is

not integral and can be detached without destroying the

sound-producing apparatus

311 Bar zithers The string bearer is bar-shaped; it may be a board placed

edgewise

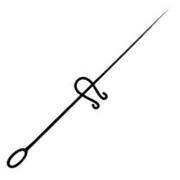

311.1 Musical bows The string bearer is flexible (and curved)

311.11 Idiochord musical bows The string is cut from the bark of the

cane, remaining attached at each end

311.111 Mono-idiochord musical bows The bow has one idiochord

string only New Guinea (Sepik R.), Togo


311.112 Poly-idiochord musical bows or harp-bows The bow has

several idiochord strings which pass over a toothed stick

or bridge W. Africa (Fan)

311.12 Heterochord musical bows The string is of separate material from

the bearer

311.121 Mono-heterochord musical bows The bow has one heterochord

string only

311.121.1 Without resonator NB If a separate, unattached resonator is

used, the specimen belongs to 311.121.21. The human

mouth is not to be taken into account as a resonator

311.121.11 Without tuning noose Africa (ganza, samuius, to)

311.121.12 With tuning noose A fibre noose is passed round the string,

dividing it into two sections

South-equatorial Africa (n’kungo, uta)

311.121.2 With resonator

311.121.21 With independent resonator Borneo (busoi)

311.121.22 With resonator attached

311.121.221 Without tuning noose S. Africa (hade, thomo)

311.121.222 With tuning noose S. Africa, Madagascar (gubo, hungo, bobre)

311.122 Poly-heterochord musical bows The bow has several heterochord

strings

311.122.1 Without tuning noose Oceania (kalove)

311.122.2 With tuning noose Oceania (pagolo)

311.2 Stick zithers The string carrier is rigid

311.21 Musical bow cum stick The string bearer has one flexible, curved

end. NB Stick zithers with both ends flexible and curved,

like the Basuto bow, are counted as musical bows India

311.22 (True) stick zithers NB Round sticks which happen to be hollow

by chance do not belong on this account to the tube zithers,

but are round-bar zithers; however, instruments in which a

tubular cavity is employed as a true resonator, like the

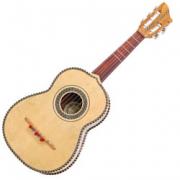

modern Mexican harpa, are tube zithers

311.221 With one resonator gourd India (tuila), Celebes (suleppe)

311.222 With several resonator gourds India (vina)

312 Tube zithers The string bearer is a vaulted surface

312.1 Whole-tube zithers The string carrier is a complete tube

312.11 Idiochord (true) tube zithers

Africa and Indonesia (gonra, togo, valiha)

312.12 Heterochord (true) tube zithers

312.121 Without extra resonator S.E. Asia (alligator)

312.122 With extra resonator An internode length of bamboo is placed

inside a palm leaf tied in the shape of a bowl Timor

312.2 Half-tube zithers The strings are stretched along the convex surface

of a gutter

312.21 Idiochord half-tube zithers Flores


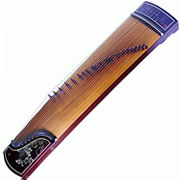

312.22 Heterochord half-tube zithers E. Asia (k’in, koto)

313 Raft zithers The string bearer is composed of canes tied together in

the manner of a raft

313.1 Idiochord raft zithers India, Upper Guinea, Central Congo

313.2 Heterochord raft zithers N. Nyasa region

314 Board zithers The string bearer is a board; the ground too is to be

counted as such

314.1 True board zithers The plane of the strings is parallel with that of

the string bearer

314.11 Without resonator Borneo

314.12 With resonator

314.121 With resonator bowl The resonator is a fruit shell or similar

object, or an artificially carved equivalent Nyasa region

314.122 With resonator box (box zither) The resonator is made from

slats Zither, Hackbrett, pianoforte

314.2 Board zither variations The plane of the strings is at right angles

to the string bearer

314.21 Ground zithers The ground is the string bearer; there is only one

string Malacca, Madagascar

314.22 Harp zithers A board serves as string bearer; there are several

strings and a notched bridge Borneo

315 Trough zithers The strings are stretched across the mouth of a trough

Tanganyika

315.1 Without resonator

315.2 With resonator The trough has a gourd or a similar object attached

to it

316 Frame zithers The strings are stretched across an open frame

316.1 Without resonator Perhaps amongst medieval psalteries

316.2 With resonator W. Africa, amongst the Kru (kani)

32 Composite chordophones A string bearer and a resonator are organically

united and cannot be separated without destroying the

instrument

321 Lutes The plane of the strings runs parallel with the sound-table

321.1 Bow lutes [pluriarc] Each string has its own flexible carrier

Africa (akam, kalangu, wambi)

321.2 Yoke lutes or lyres The strings are attached to a yoke which lies

in the same plane as the sound-table and consists of two

arms and a cross-bar

321.21 Bowl lyres A natural or carved-out bowl serves as the resonator

Lyra, E. African lyre

321.22 Box lyres A built-up wooden box serves as the resonator

Cithara, crwth

321.3 Handle lutes The string bearer is a plain handle. Subsidiary necks,

as e.g. in the Indian prasarini vina are disregarded, as are

also lutes with strings distributed over several necks, like


the harpolyre, and those like the Lyre-guitars, in which the

yoke is merely ornamental

321.31 Spike lutes The handle passes diametrically through the resonator

321.311 Spike bowl lutes The resonator consists of a natural or carved-out

bowl Persia, India, Indonesia

321.312 Spike box lutes or spike guitars The resonator is built up from

wood Egypt (rebab)

321.313 Spike tube lutes The handle passes diametrically through the

walls of a tube China, Indochina

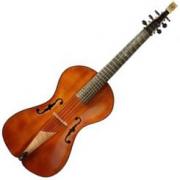

321.32 Necked lutes The handle is attached to or carved from the resonator,

like a neck

321.321 Necked bowl lutes Mandoline, theorbo, balalaika

321.322 Necked box lutes or necked guitars NB Lutes whose body is

built up in the shape of a bowl are classified as bowl lutes

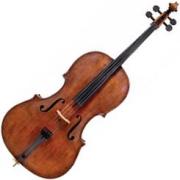

Violin, viol, guitar

322 Harps The plane of the strings lies at right angles to the sound-table;

a line joining the lower ends of the strings would point

towards the neck

322.1 Open harps The harp has no pillar

322.11 Arched harps The neck curves away from the resonator

Burma and Africa

322.12 Angular harps The neck makes a sharp angle with the resonator

Assyria, Ancient Egypt, Ancient Korea

322.2 Frame harps The harp has a pillar

322.21 Without tuning action All medieval harps

322.211 Diatonic frame harps

322.212 Chromatic frame harps

322.212.1 With the strings in one plane Most of the older chromatic harps

322.212.2 With the strings in two planes crossing one another

The Lyon chromatic harp

322.22 With tuning action The strings can be shortened by mechanical

action

322.221 With manual action The tuning can be altered by hand-levers

Hook harp, dital harp, harpinella

322.222 With pedal action The tuning can be altered by pedals

323 Harp lutes The plane of the strings lies at right angles to the sound-table;

a line joining the lower ends of the strings would be

perpendicular to the neck. Notched bridge

W. Africa (kasso, etc.)
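
The numbering in the table above is decimal: each further digit marks a subdivision of the division named by the digits before it, and the points appear to serve only to break the figures into groups of three. The following is a minimal sketch of that reading, not part of the original text; the function names `format_number` and `ancestors` are illustrative assumptions.

```python
from __future__ import annotations


def format_number(digits: str) -> str:
    """Insert a point after every third digit, as the numbers in the table appear to be printed."""
    groups = [digits[i:i + 3] for i in range(0, len(digits), 3)]
    return ".".join(groups)


def ancestors(number: str) -> list[str]:
    """Return every division that encloses `number`, broadest first, ending with the number itself."""
    digits = number.replace(".", "")
    if not digits.isdigit():
        raise ValueError(f"not a classification number: {number!r}")
    # Each prefix of the digit string names one enclosing division.
    return [format_number(digits[:end]) for end in range(1, len(digits) + 1)]


if __name__ == "__main__":
    # 311.121.222: mono-heterochord musical bow, resonator attached, with tuning noose
    for division in ancestors("311.121.222"):
        print(division)
```

Read in this way, 311.121.222 lies within 311.121.22 (with resonator attached), which lies within 311.121.2 (with resonator), and so on up to 3 (chordophones).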

Suffixes for use with any division of this class (chordophones):

-4 sounded by hammers or beaters

-5 sounded with the bare fingers

-6 sounded by plectrum

-7 sounded by bowing

-71 with a bow


-72 by a wheel

-73 by a ribbon [Band]

-8 with keyboard

-9 with mechanical drive
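
The suffixes above are appended to a number from the chordophone tables after a hyphen. The following is a minimal sketch of how such a compound number might be split and read; it is not part of the original text. The names `CHORDOPHONE_SUFFIXES` and `split_suffixes` are illustrative, and the sketch assumes that more than one suffix may be chained, each after its own hyphen.

```python
from __future__ import annotations

# Suffix meanings as listed above for class 3 (chordophones).
CHORDOPHONE_SUFFIXES = {
    "4": "sounded by hammers or beaters",
    "5": "sounded with the bare fingers",
    "6": "sounded by plectrum",
    "7": "sounded by bowing",
    "71": "sounded by bowing, with a bow",
    "72": "sounded by bowing, by a wheel",
    "73": "sounded by bowing, by a ribbon [Band]",
    "8": "with keyboard",
    "9": "with mechanical drive",
}


def split_suffixes(code: str) -> tuple[str, list[str]]:
    """Split a compound number into its base number and the meanings of its suffixes.

    Assumption: suffixes may be chained, each introduced by its own hyphen.
    """
    base, *suffixes = code.split("-")
    if not base.startswith("3"):
        raise ValueError(f"these suffixes apply to chordophones (class 3) only: {code!r}")
    unknown = [s for s in suffixes if s not in CHORDOPHONE_SUFFIXES]
    if unknown:
        raise ValueError(f"unknown suffix(es) {unknown} in {code!r}")
    return base, [CHORDOPHONE_SUFFIXES[s] for s in suffixes]


if __name__ == "__main__":
    # Illustrative only: a necked box lute (321.322) sounded with a bow.
    print(split_suffixes("321.322-71"))
```

On this reading a violin, given in the table under 321.322, would be written 321.322-71 when played with a bow, and a box zither sounded by hammers through a keyboard, such as the pianoforte under 314.122, would take both -4 and -8.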
